1. Introduction

Hello! I’m Manjunatha D S, a Software Development Engineer in Test (SDET) at Money Forward, working on automation and test framework integration across multiple HR products.

In my previous blog, I wrote about how we scaled API test automation across multiple products using a shared framework approach. That article focused on how embedding API tests inside each product repository helped standardize structure and collaboration across teams.

But today, I want to step back and answer a more fundamental question: Why did we build Lager in the first place?

Before Lager, we had unit tests and well-defined manual E2E tests. The API layer, where most business logic lives, lacked structured automated validation and was mostly tested manually in shared environments.

That created predictable friction:

- API bugs surfaced only during staging verification

- Developers debugged issues after deployment, not during development

- Manual validation slowed iteration

- Tests depended on complex, unreliable shared test data

The gap wasn’t tooling – it was feedback timing.

That led us to a deeper question: Where should API tests actually run if we want fast, reliable feedback?

If shift-left testing is the goal, API validation should happen as close as possible to where code is written. That realization led us to design Lager, an API testing framework built on TypeScript, Playwright, and Cucumber.js, around one guiding principle: make API testing as easy as running npm test, so developers can validate behavior during development and catch bugs when they are cheapest to fix.

Only after establishing that foundation did we scale it across multiple backend products.

In this blog, I’ll explain the problems that led us to build Lager, the principles behind its design, and what we learned while adopting it.

2. The Cost and Tradeoffs of Where API Tests Run

Modern backend systems rely heavily on CI pipelines and shared staging environments. While these are essential for quality control, they are not always the best place to detect API issues.

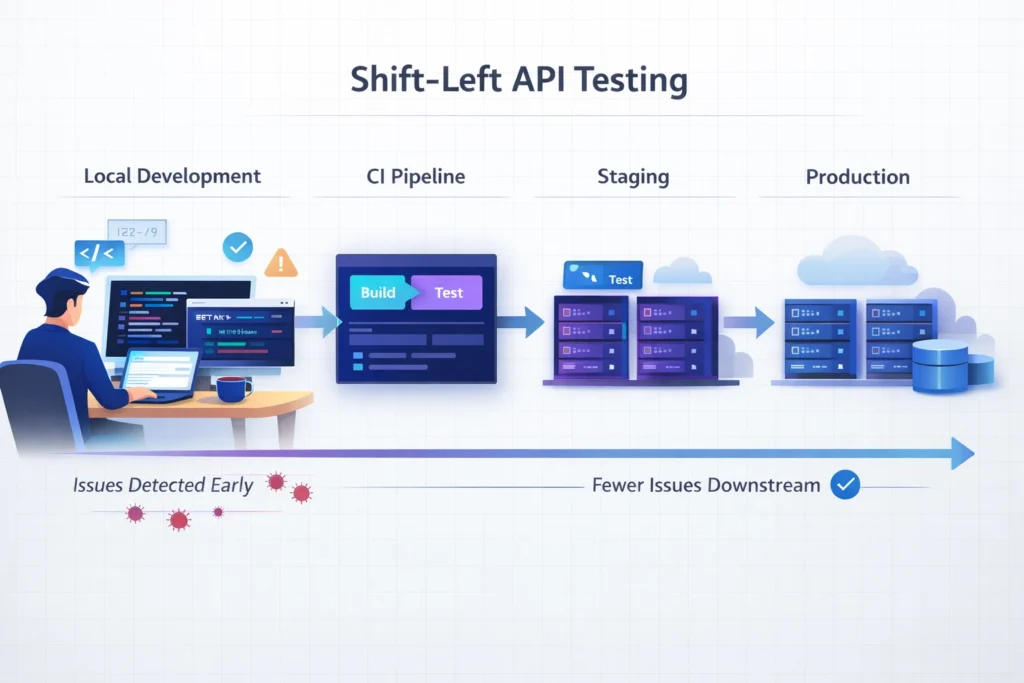

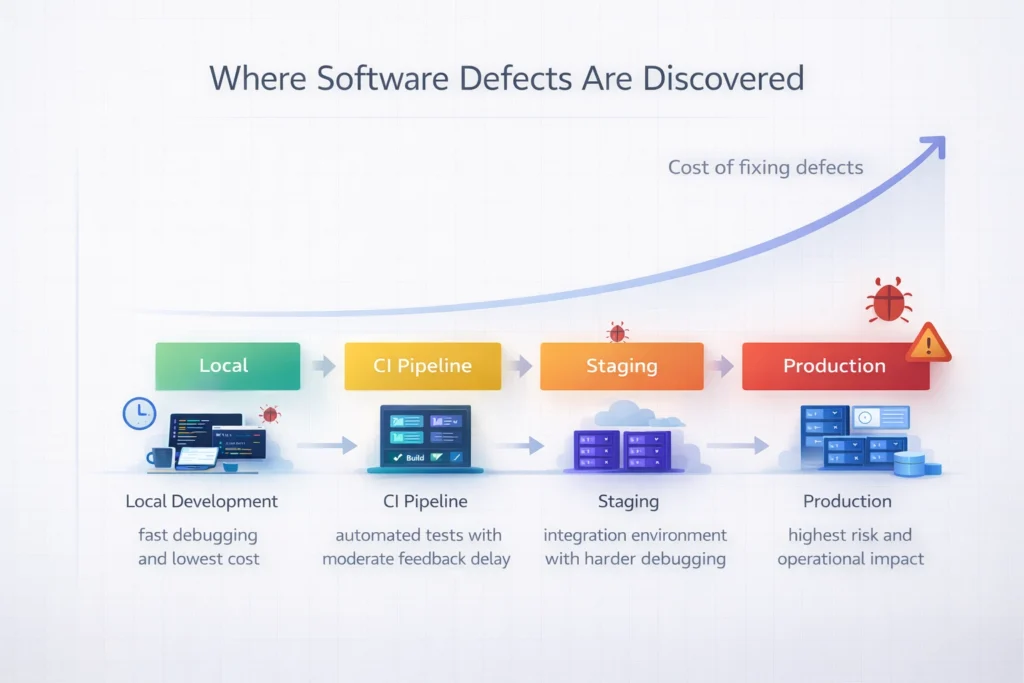

Consider the typical failure discovery timeline: Local → CI → Staging → Production

Visual Overview: Failure Discovery Timeline

Cost and debugging effort increase as failures move downstream from local development to production environments.

Local Failure

- Discovered within minutes with full debugging context

- No pipeline noise

- No team coordination required

This is the cheapest and fastest feedback loop.

CI Failure

- Discovered after pipeline execution, often 15-40 minutes later

- Requires re-run and context reload

- Blocks pull requests

CI improves consistency, but failures are still detected only after code is pushed.

Staging Failure

- Often discovered during integration across services

- Shared data and deployments introduce instability and coordination overhead

- Root cause isolation becomes significantly harder

At this point, the cost is no longer just time – it’s friction.

Production Failure

- Business impact

- Incident management

- Customer trust at risk

The further a defect travels, the more expensive it becomes to resolve, and that cost includes intangible damage such as lost customer trust and reputation.

Even when testing is automated, late discovery remains costly. Developers switch context. Pipelines become noisy. Debugging becomes reactive instead of proactive.

To clarify our thinking, we evaluated the strengths and weaknesses of each testing layer.

| Environment | Strengths | Limitations |
|---|---|---|
| Local | Fast feedback, low cost, fully debuggable | Hard to standardize, auth complexity, frequent re-runs on changes |
| CI | Automated, reproducible, merge gating | Slower feedback, pipeline overhead |
| Staging | Real integrations, production-like | Shared instability, coordination required |

The insight was simple:

- API tests should be easy to run locally so developers can validate behavior while implementing changes.

- CI then serves as the reliable source of truth, confirming results in a clean, reproducible environment.

Once we accepted that principle, the next question was obvious: local API testing sounds ideal, but how do we make it practical?

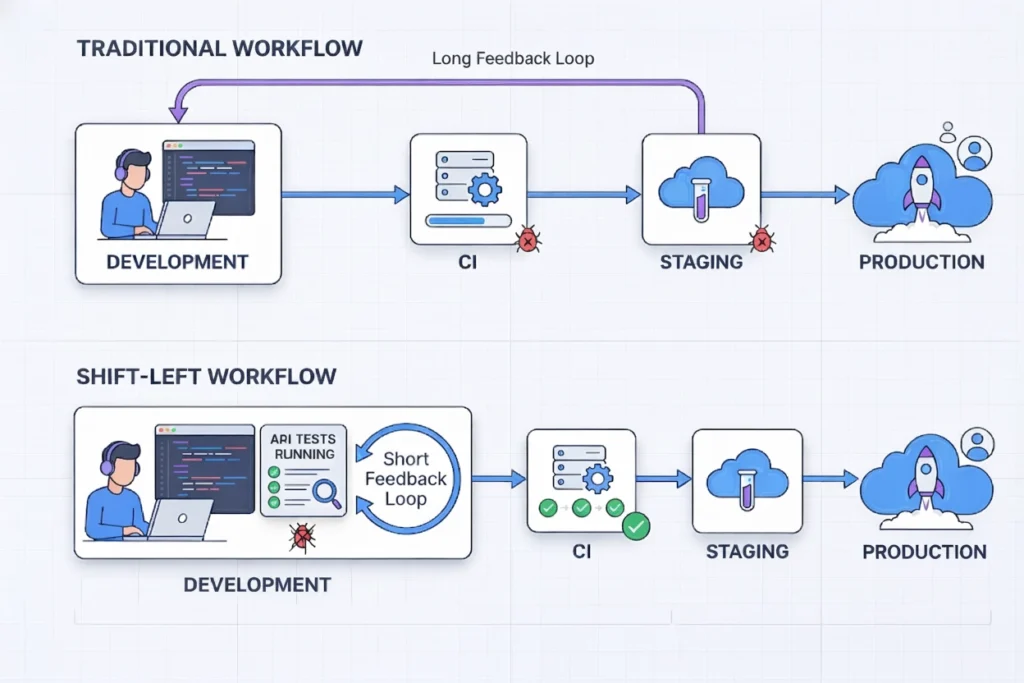

3. Why Shift-Left Matters (Beyond Cost)

Cost reduction is one benefit of early testing, but it’s not the most important one. The real value of shift-left testing is how it changes behavior.

Visual Overview: Shift-Left Feedback Loop

Running API tests during development shortens feedback loops and makes quality part of everyday implementation.

Developer Ownership

When developers can validate API behavior locally before pushing code, quality becomes part of development rather than an external gate enforced by CI or QA.

Testing stops being something that “happens later” and becomes something built alongside implementation. Quality becomes a shared responsibility across the team, rather than something owned by a single role.

Faster Iteration Cycles

Immediate feedback enables smaller, safer changes. Instead of waiting for pipeline results, developers can validate assumptions in minutes. This short feedback loop encourages experimentation and continuous improvement.

Better API Design

Writing and executing API tests early forces clarity.

Unclear contracts, inconsistent validation rules, and missing edge cases surface immediately, before they solidify into technical debt. Early testing improves not just correctness, but design quality.

Reduced Cognitive Load

Debugging in CI requires reconstructing context:

- What changed?

- What state was the system in?

- Is this environment-related?

Debugging locally happens in the same environment where the code was written. The mental model is still fresh, and feedback is immediate.

Shift-left is not just a technical strategy – it is a cultural shift toward building quality into the first commit, rather than inspecting it after the fact.

4. The Industry Pattern We Wanted to Improve

In many enterprise environments, testing strategies tend to emphasize:

- Heavy CI-driven validation

- Centralized pipelines

- Process-heavy workflows

- Tool-first, developer-second setups

These systems are powerful and scalable. They enforce consistency and protect production systems. But they can also introduce friction:

- Developers wait for pipelines to validate even basic API logic.

- Testing becomes “something CI does,” not “something I verify.”

- Local environments become underutilized for automation.

This over-reliance on downstream validation creates bottlenecks – a pitfall highlighted in Martin Fowler’s Test Pyramid principles and Jez Humble’s Continuous Delivery practices. DORA’s research, summarized in Accelerate (Forsgren, Humble, Kim), also shows that shorter feedback loops strongly correlate with elite software delivery performance. Waiting on CI for basic correctness actively works against that speed.

We wanted something different. We wanted to align with Microsoft’s shift-left guidance, moving validation to the earliest possible point in the development lifecycle.

We wanted a framework that is:

- Developer-friendly, minimizing context switching between programming languages

- Easy to write and maintain, even without deep testing expertise

- Lightweight, with minimal setup overhead

- Local-friendly, while integrating smoothly with CI/CD pipelines

- Scalable across multiple products

CI should remain a safeguard. But feedback must arrive earlier.

That vision led us to design Lager, a framework built to make API testing practical across development and CI environments.

5. Designing Lager

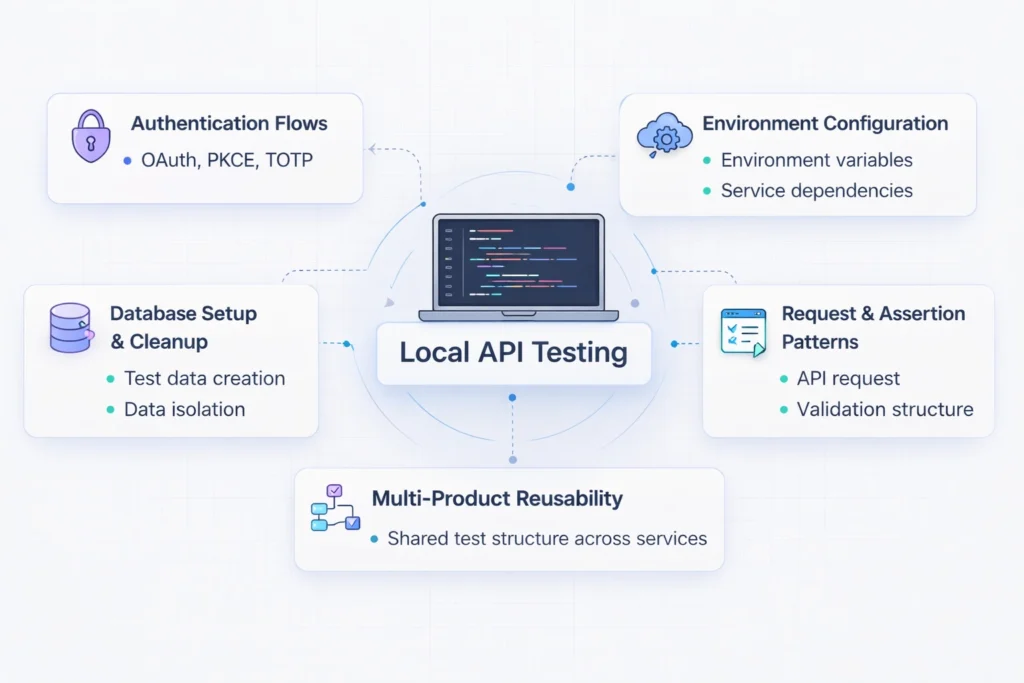

Once we agreed that API validation should begin earlier, the next challenge was clear: how do we make API testing practical during development?

The idea of “run tests locally” sounds simple. In reality, it involves solving several non-trivial problems:

- Authentication flows (OAuth, PKCE, TOTP)

- Environment configuration drift

- Database setup, cleanup and schema changes

- Consistent request and assertion patterns

- Reusable structure across multiple backend products
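To make the first item concrete: in an OAuth authorization-code flow with PKCE (RFC 7636), a test client must create a random code verifier and send its SHA-256 ("S256") code challenge with the authorization request. The following is a minimal sketch of just that step, using only Node's built-in node:crypto module. The function names are hypothetical and not part of Lager, and the redirect and token-exchange calls are left out.

```typescript
import { createHash, randomBytes } from "node:crypto";

// Code verifier: a high-entropy random string of URL-safe characters.
// 32 random bytes encode to 43 base64url characters (RFC 7636 allows 43-128).
function createCodeVerifier(): string {
  return randomBytes(32).toString("base64url");
}

// S256 code challenge: BASE64URL(SHA-256(ASCII(code_verifier))), without padding.
function createCodeChallenge(verifier: string): string {
  return createHash("sha256").update(verifier, "ascii").digest("base64url");
}

// Example pair from RFC 7636, Appendix B.
const exampleChallenge = createCodeChallenge("dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk");
// exampleChallenge is "E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM"
```

The client keeps the verifier and sends it later with the token request, which lets the authorization server check that both requests came from the same client. The fixed input is the example pair published in the specification, so it doubles as a self-check.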

Visual Overview: Designing Lager for Practical API Testing

Running API tests locally depends on multiple infrastructure components working together.

Design Principles

Before writing a single line of framework code, we defined a few guiding principles:

1. Runs in Local / Docker Environments

Tests should run locally with minimal setup, either directly on a developer machine or through Docker.

If running API tests requires complex manual configuration, developers simply won’t do it.

2. Developer-Friendly

The framework should:

- Reduce boilerplate and hide infrastructure complexity

- Expose simple, readable test definitions

- Avoid context switching

- Be easy to learn, even without deep testing expertise

Developers should describe behavior, not manage HTTP plumbing.

3. Scalable Across Products

The solution should work for:

- REST APIs

- GraphQL APIs

- Different authentication strategies

- Different database configurations

All without fragmenting into separate per-project implementations.

6. Introducing Lager

Lager is a lightweight API testing framework designed to provide fast feedback while running consistently across development and CI environments. It does not replace CI automation. It strengthens it by ensuring tests are reliable before they reach the pipeline.

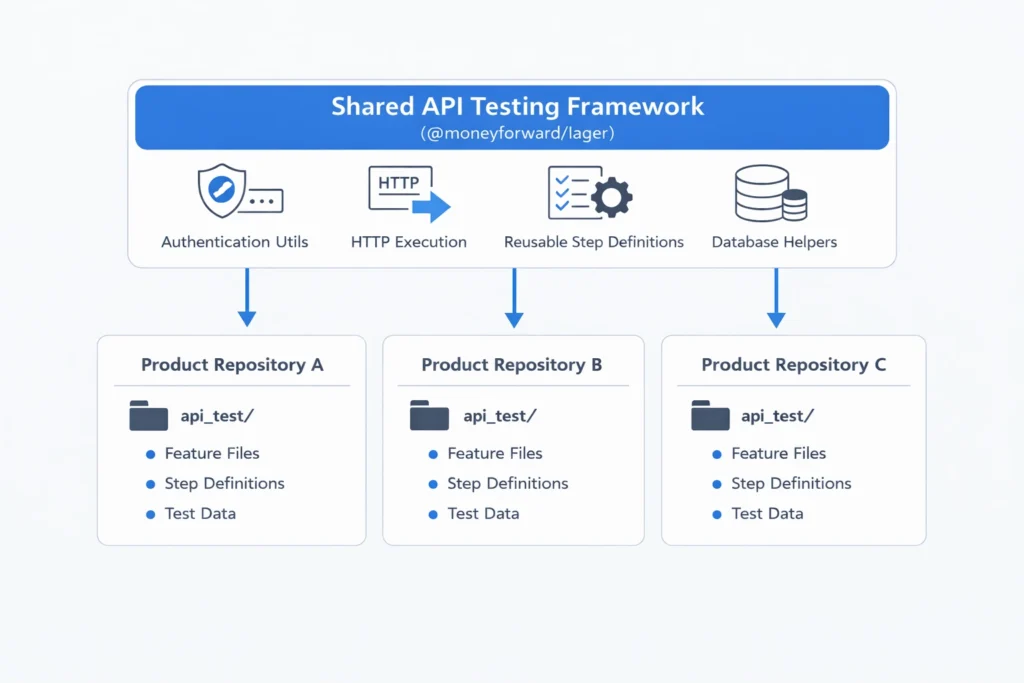

Architecture: A Two-Layer Model

To support this workflow, Lager is structured around a simple two-layer architecture.

Visual Overview: Lager Two-Layer Architecture

Lager separates shared testing infrastructure from product-level API tests.

Layer 1: Shared Framework (@moneyforward/lager)

Provides:

- Authentication utilities (Basic, OAuth + TOTP)

- HTTP execution via Playwright’s APIRequestContext

- Reusable Cucumber step definitions and assertion helpers

- Database integration via Knex and structured logging

This layer centralizes infrastructure concerns so individual products do not need to reimplement these capabilities. It reduces code duplication across repositories and makes the framework easier to maintain and evolve. Updates to the framework become immediately available to every integrated repository.
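For the OAuth + TOTP utility, the framework has to produce the same one-time code an authenticator app would. As an illustration only (this is not Lager's actual source), a TOTP generator following RFC 6238 fits in a few lines of TypeScript with node:crypto. The sketch assumes HMAC-SHA1, 30-second steps, and 6 digits by default, and it assumes the shared secret is already raw bytes; authenticator secrets are usually Base32-encoded and would need decoding first.

```typescript
import { createHmac } from "node:crypto";

// HOTP (RFC 4226): HMAC-SHA1 over an 8-byte big-endian counter, then dynamic truncation.
function hotp(secret: Buffer, counter: number, digits = 6): string {
  const message = Buffer.alloc(8);
  message.writeBigUInt64BE(BigInt(counter));
  const hmac = createHmac("sha1", secret).update(message).digest();

  // Dynamic truncation: the low 4 bits of the last byte select a 4-byte window.
  const offset = hmac[hmac.length - 1] & 0x0f;
  const binary =
    ((hmac[offset] & 0x7f) << 24) |
    (hmac[offset + 1] << 16) |
    (hmac[offset + 2] << 8) |
    hmac[offset + 3];

  return (binary % 10 ** digits).toString().padStart(digits, "0");
}

// TOTP (RFC 6238): HOTP whose counter is the number of time steps since the Unix epoch.
function totp(
  secret: Buffer,
  unixSeconds = Math.floor(Date.now() / 1000),
  stepSeconds = 30,
  digits = 6,
): string {
  return hotp(secret, Math.floor(unixSeconds / stepSeconds), digits);
}
```

With the specification's test secret (the ASCII string 12345678901234567890), totp(secret, 59, 30, 8) yields 94287082, one of the published RFC 6238 test vectors, which makes a convenient self-check for any implementation.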

Layer 2: Product-Level api_test/ Folder

Each backend repository contains its own:

- Gherkin feature files

- Product-specific step extensions

- Test data definitions

Tests live alongside the code they validate. That ensures:

- Reuse without rigidity

- Local ownership without duplication

- Scalability without fragmentation

The entire design centers on one idea: simplifying the path between writing API code and validating its behavior.

If running API tests feels as natural as running unit tests, developers will do it. That was the bar. And that is how Lager was designed.

Example: A Simple Lager API Test

```gherkin
Feature: Create Employee

  Scenario: Employee record is persisted after creation
    Given a valid authentication token
    When I send POST /employees with valid payload
    Then the response status should be 201
    And the database should contain the new employee record
```

No HTTP client setup. No token management. No assertion boilerplate. Just behavior.
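What makes this possible is the shared step-definition layer: each step line is matched against a pattern, and the captured values are passed to a handler. In Lager that matching is done by Cucumber.js itself. The dependency-free StepRegistry below is a hypothetical toy model of the mechanism, not Cucumber's API or implementation. It shows how one regex-based definition can serve every "When I send METHOD PATH with valid payload" step, and why a step that matches no definition, or more than one, is reported instead of silently passing.

```typescript
// A step handler receives the capture groups of the pattern that matched.
type StepHandler = (...args: string[]) => void;

interface StepDefinition {
  pattern: RegExp;
  handler: StepHandler;
}

// Hypothetical, dependency-free model of step matching (not Cucumber's implementation).
class StepRegistry {
  private readonly definitions: StepDefinition[] = [];

  define(pattern: RegExp, handler: StepHandler): void {
    this.definitions.push({ pattern, handler });
  }

  run(stepText: string): void {
    const candidates = this.definitions
      .map((def) => ({ def, match: def.pattern.exec(stepText) }))
      .filter((candidate) => candidate.match !== null);

    // Exactly one definition must match; otherwise the step is undefined or ambiguous.
    if (candidates.length === 0) throw new Error(`Undefined step: "${stepText}"`);
    if (candidates.length > 1) throw new Error(`Ambiguous step: "${stepText}"`);

    const { def, match } = candidates[0];
    def.handler(...(match as RegExpExecArray).slice(1));
  }
}

// One shared definition covers every method/path combination used in feature files.
const registry = new StepRegistry();
const sent: string[] = [];
registry.define(/^I send (GET|POST|PUT|PATCH|DELETE) (\S+) with valid payload$/, (method, path) => {
  sent.push(`${method} ${path}`);
});

registry.run("I send POST /employees with valid payload");
// sent now holds the single entry "POST /employees"
```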

7. Adopting Lager Across Products

After building Lager, we introduced it across several backend repositories, including:

- Attendance API

- Connected DB backend

- HR Common Master backend

Each product maintains an api_test/ directory directly inside its repository.

This approach ensures:

- Tests live next to the API implementation

- Developers can execute tests locally before pushing

- CI reuses the same test commands

The workflow remains consistent across products, even when the underlying architectures differ.

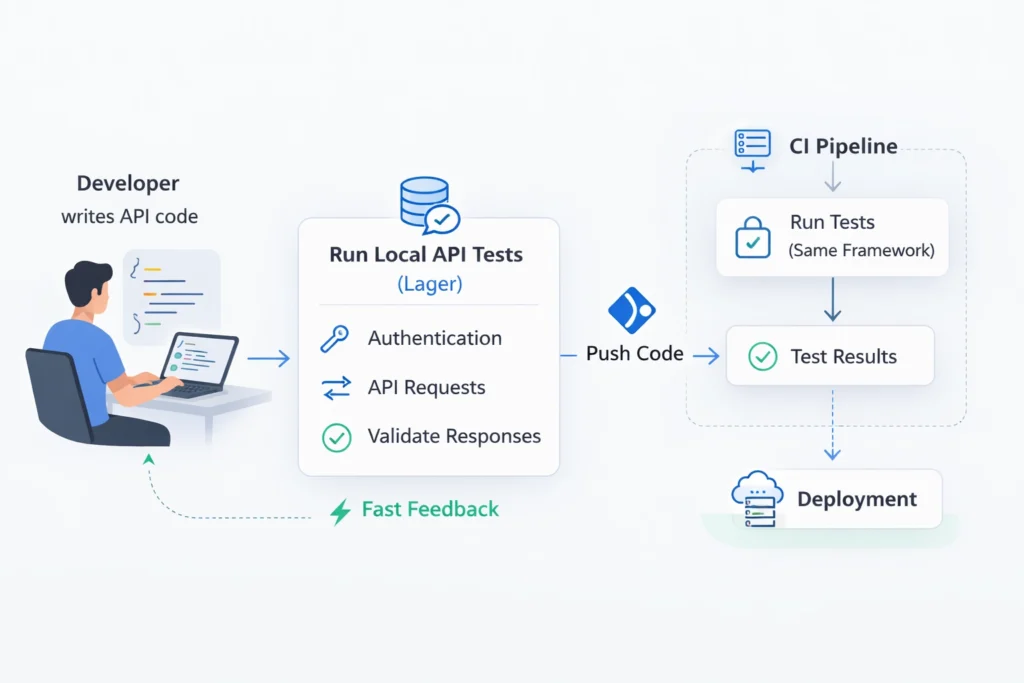

A Typical Development Flow

With Lager in place, the development cycle changed.

Visual Overview: Development Workflow with Lager

API tests run locally during development and continue through the CI pipeline.

A developer implementing a new endpoint can:

- Start the backend locally

- Run the API test suite

- Validate authentication, response structure, and business logic

- Push the change with confidence

Instead of surfacing in staging after days of development, issues are caught within minutes locally, or in CI shortly after pushing, while the implementation context is still fresh.

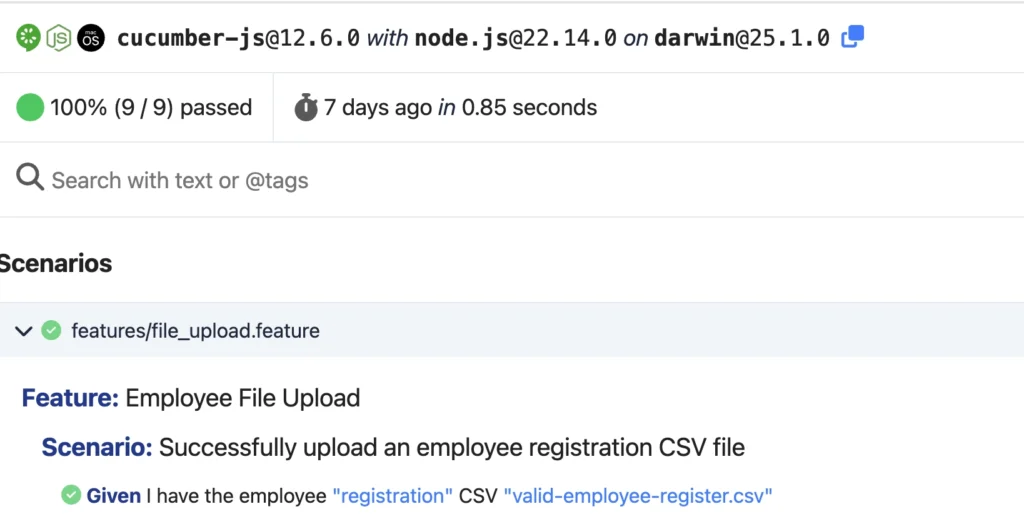

Observed Impact

Across the repositories where Lager has been adopted, we observed several practical improvements.

- CI-only failures became less frequent

- Smoke coverage expanded across critical API endpoints

- Debugging cycles became noticeably faster

- Developers contributed more actively to API tests because Gherkin let them write scenarios in plain English

- API regressions were detected earlier in the development cycle

Visual Overview: Cucumber Report

The biggest improvement wasn’t technical – it was behavioral. Developers began treating API tests as part of implementation, not post-implementation validation.

Testing shifted from post-validation to built-in validation, and the cultural shift was the real success.

8. Challenges We Faced

Building a local-friendly framework was not trivial. While Lager improved feedback loops and developer ownership, we encountered real challenges along the way.

Local Environment Standardization

Different products had different setup requirements – databases, service dependencies, and configuration variables. Maintaining local development environments outside Docker often led to the classic “it works on my machine” problem, which consumed significant time just to reproduce a consistent setup from scratch.

We also observed inconsistencies across products, such as different flows for similar actions, different solutions to similar problems, and inconsistent configuration values.

We addressed this by:

- Creating shared configuration utilities

- Documenting minimal local bootstrap steps

However, further improvements are still possible at the product and development workflow level.

Authentication Complexity

Managing authentication tokens and session flows locally required careful handling.

We introduced:

- Reusable authentication utilities

- Token caching mechanisms
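A token cache saves every scenario from repeating the full login flow. Below is one possible shape for it, sketched under assumptions rather than taken from Lager: tokens are cached per user key and refreshed once they come within a safety margin of expiry. TokenCache, the issue callback, and the 30-second margin are hypothetical. The issuer is synchronous only to keep the sketch short; a real login call is asynchronous, and a production cache would store the pending Promise so parallel scenarios share a single request.

```typescript
interface IssuedToken {
  accessToken: string;
  expiresAt: number; // epoch milliseconds
}

// Hypothetical per-user token cache that refreshes shortly before expiry.
class TokenCache {
  private readonly entries = new Map<string, IssuedToken>();
  private readonly issue: (user: string) => IssuedToken;
  private readonly now: () => number;
  private readonly refreshMarginMs: number;

  constructor(
    issue: (user: string) => IssuedToken,
    now: () => number = Date.now,
    refreshMarginMs = 30_000,
  ) {
    this.issue = issue;
    this.now = now;
    this.refreshMarginMs = refreshMarginMs;
  }

  get(user: string): string {
    const cached = this.entries.get(user);
    // Reuse a token only while it stays valid beyond the refresh margin.
    if (cached !== undefined && cached.expiresAt - this.refreshMarginMs > this.now()) {
      return cached.accessToken;
    }
    const fresh = this.issue(user);
    this.entries.set(user, fresh);
    return fresh.accessToken;
  }
}
```

Injecting the clock (now) keeps expiry behavior testable without waiting for real tokens to expire.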

Test Data & DB Initialization

Managing test data and ensuring a consistent database state before each test run was error-prone and slow.

We introduced:

- Lager CLI with recursive DB dumps and foreign-key traversal

- Snapshot-based test data restoration for reliable, repeatable test runs
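Foreign-key traversal matters because a dumped row can only be restored after the rows it references. The sketch below is simplified: it works on table names only, and it is not the Lager CLI's code. Starting from a seed table, it follows foreign keys depth-first and emits tables parents first, which is a safe order for inserting a snapshot; clearing data would use the reverse order. The schema map and table names are hypothetical, self-references are ignored for ordering, and any other cycle is reported as an error.

```typescript
// Hypothetical schema map: each table lists the tables its foreign keys reference.
type ForeignKeyMap = Record<string, string[]>;

// Returns the seed table plus every table it transitively references, parents first.
function dependencyOrder(fks: ForeignKeyMap, seed: string): string[] {
  const order: string[] = [];
  const state = new Map<string, "visiting" | "done">();

  const visit = (table: string, path: string[]): void => {
    const status = state.get(table);
    if (status === "done") return;
    if (status === "visiting") {
      throw new Error(`Foreign-key cycle: ${[...path, table].join(" -> ")}`);
    }
    state.set(table, "visiting");
    for (const parent of fks[table] ?? []) {
      // Self-references (e.g. an employee's manager) do not constrain table order.
      if (parent !== table) visit(parent, [...path, table]);
    }
    state.set(table, "done");
    order.push(table); // post-order: referenced tables are always pushed first
  };

  visit(seed, []);
  return order;
}

const schema: ForeignKeyMap = {
  attendance_records: ["employees"],
  employees: ["departments", "companies", "employees"],
  departments: ["companies"],
  companies: [],
};

const restoreOrder = dependencyOrder(schema, "attendance_records");
// parents first: companies, departments, employees, attendance_records
```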

Local vs CI Parity

Ensuring that local tests behave identically in CI requires consistent configuration management.

We solved this by:

- Avoiding CI-only logic

- Ensuring environment variables are clearly defined
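One way to keep environment variables clearly defined is to declare them in a single place and fail fast, listing everything that is missing, before any test runs. Local runs and CI then share the same loading path and the same error. A minimal sketch with a hypothetical spec format and variable names; in a real suite the source argument would be process.env.

```typescript
// Hypothetical spec format: a variable is either required or has a default.
type VarSpec = { required: true } | { default: string };

function loadConfig<K extends string>(
  spec: Record<K, VarSpec>,
  source: Record<string, string | undefined>,
): Record<K, string> {
  const config = {} as Record<K, string>;
  const missing: string[] = [];

  for (const name of Object.keys(spec) as K[]) {
    const rule = spec[name];
    const value = source[name];
    if (value !== undefined && value !== "") {
      config[name] = value;
    } else if ("default" in rule) {
      config[name] = rule.default;
    } else {
      missing.push(name);
    }
  }

  // Report every missing variable at once instead of failing on the first one.
  if (missing.length > 0) {
    throw new Error(`Missing required environment variables: ${missing.join(", ")}`);
  }
  return config;
}

// Hypothetical variable names and values.
const config = loadConfig(
  { API_BASE_URL: { required: true }, DB_HOST: { required: true }, LOG_LEVEL: { default: "info" } },
  { API_BASE_URL: "http://localhost:3000", DB_HOST: "127.0.0.1" },
);
```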

Adoption Curve

Developers were initially more accustomed to discovering test failures during CI execution rather than during local development.

Training sessions, documentation, and early SDET support helped teams build confidence in running tests locally.

These challenges ultimately strengthened the framework and reinforced a key principle: Fast and reliable API testing requires not only infrastructure, but discipline and consistency across teams.

9. Future Plans

While Lager has significantly improved API testing across our backend systems, it continues to evolve. Several areas remain priorities for further improvement.

AI-Assisted Test Generation

We are exploring ways to leverage AI to:

- Suggest test cases from API definitions

- Generate structured test inputs

- Automatically identify missing coverage

The goal is not to replace human-written tests, but to reduce manual effort and surface missing scenarios earlier in the development process.

Improved Local Bootstrap and Environment Profiles

Reducing setup friction remains a priority. Planned improvements include:

- Standardized environment setup scripts

- Automated test data seeding

- Structured environment profiles (e.g., local, staging, ci) to reduce configuration drift

Local testing should be predictable and easy to initialize.
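Structured profiles are often modeled as shared defaults plus small per-profile overrides, selected by a single variable. The sketch below is one hypothetical shape for the local, staging, and ci profiles mentioned above; the settings, values, and the staging URL are illustrative placeholders. Keeping profile differences in data rather than in if-branches inside test code is also what avoiding CI-only logic asks for.

```typescript
const PROFILES = ["local", "staging", "ci"] as const;
type Profile = (typeof PROFILES)[number];

// Hypothetical settings a test run might need.
interface TestSettings {
  baseUrl: string;
  resetDatabase: boolean;
  requestTimeoutMs: number;
}

// Defaults shared by every profile.
const defaults: TestSettings = {
  baseUrl: "http://localhost:3000",
  resetDatabase: true,
  requestTimeoutMs: 10_000,
};

// Only the values that differ per profile; everything else comes from defaults.
const overrides: Record<Profile, Partial<TestSettings>> = {
  local: {},
  ci: { requestTimeoutMs: 30_000 },
  staging: { baseUrl: "https://staging.example.com", resetDatabase: false },
};

function isProfile(name: string): name is Profile {
  return (PROFILES as readonly string[]).includes(name);
}

function resolveSettings(profileName = "local"): TestSettings {
  if (!isProfile(profileName)) {
    throw new Error(`Unknown profile "${profileName}"; expected one of ${PROFILES.join(", ")}`);
  }
  return { ...defaults, ...overrides[profileName] };
}
```

A runner could call resolveSettings(process.env.TEST_PROFILE), where TEST_PROFILE is a hypothetical variable name; leaving it unset falls back to the local profile.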

Expanded Validation Capabilities

We plan to enhance validation depth by introducing:

- Schema validation support

- Stronger negative and edge-case testing patterns

- Improved dynamic data handling and scenario chaining

As APIs evolve, validation must evolve with them.

Performance Optimization

Database-heavy test suites can still be slow when strict data isolation is required.

We continue to optimize cleanup strategies and test data management to balance determinism with execution speed.

Cross-Product Validation

As backend systems grow more interconnected, integration scenarios spanning multiple services become increasingly important.

Extending local testing principles to support controlled cross-product flows is a natural next step.

Lager is not a finished framework – it is a foundation.

Our goal remains consistent: Make API testing fast, reliable, and developer-owned.

10. Conclusion

We started by asking, “Where should API tests actually run?”

The answer seemed simple: as close as possible to where code is written. But making that practical required deliberate design.

Before Lager, API validation largely happened in shared environments, and feedback arrived later than it should have. By shifting API testing left and making local execution practical, we changed not just tooling, but workflow.

Today:

- Tests live where code lives

- Developers validate behavior before pushing

- CI confirms stability and provides fast feedback alongside unit tests

Lager wasn’t built to replace CI or staging – it was built to improve when and how feedback happens. CI automation remains essential. With Lager, the same tests run consistently across development and CI environments. They provide fast feedback similar to unit tests while validating real API behavior. CI confirms correctness in a clean environment, while staging validates full system integrations.

Ultimately, effective API testing isn’t about writing more tests – it’s about running them at the right point in the development lifecycle. By receiving feedback earlier, we didn’t just save time; we built confidence and made quality an inherent part of development rather than an afterthought.

If you are building API automation in your organization, the most important question isn’t “Do we have enough tests?” It is “Where do our tests start, and is that early enough?” Quality transforms when feedback becomes immediate and ownership becomes shared.

Acknowledgments

Special thanks to Ruslan Naumenko and Takaaki Iida for their continuous support, technical insights, and thoughtful feedback throughout the development of Lager. Many of the refinements in this framework were shaped through our discussions.